There are two ways to collect metrics from a technology in Datadog: integrations and custom checks. Integrations are pre-built code modules that collect data from commonly used applications, and a wide range of applications already have one. But what happens when you have a custom application or a unique system that no available integration supports? The answer is custom checks. Custom checks are Python scripts that poll data from logs, custom APIs, or other data sources and send the collected data to Datadog.

When I first started creating custom checks, I spent most of my time figuring out how to develop them correctly, because I didn’t know how to set up an environment for developing them effectively. By the time I finished my first custom check, I had discovered several practices that make development significantly easier and faster. I detail them below so you can hit the ground running when developing your own custom checks for Datadog.

Setting up a development environment

The first step in creating a custom Agent check is making sure you have a development environment. A good one has two components: a Datadog Agent installed locally and a code editor that can run Python scripts for testing.

When you deploy the Datadog Agent for testing, install it in a location where you can both write the Python script and access the data you want to collect. Sometimes that means installing two Agents: one locally and another on a host that can reach the data.

The next step is selecting a code editor. I recommend Visual Studio Code, but feel free to use whatever editor you prefer as long as it handles Python and YAML. When you install the Datadog Agent, a Python environment is bundled with it. This embedded environment is what runs custom checks and integrations, so I highly recommend pointing your editor at the Agent's Python interpreter.

To change the Python interpreter in Visual Studio Code:

- View -> Command Palette -> type ‘Python: Select Interpreter’ -> click the ‘+’ button to add a new interpreter path

The path to the Datadog Agent's Python interpreter is as follows (the version suffix on Mac and Linux depends on your Agent release):

- Windows: C:\Program Files\Datadog\Datadog Agent\embedded3\python.exe

- Mac or Linux: /opt/datadog-agent/embedded/bin/python3.8

Using the Datadog Agent's Python environment makes it much easier to check whether a Python library is available to your check and to work with Agent-specific libraries such as the Agent check library.
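One quick way to confirm that the editor really is using the Agent's interpreter is to run a short script from it. The sketch below prints the interpreter path and reports which modules cannot be imported. The function name `find_missing` and the module names in the example list are illustrative, and `datadog_checks.base` is assumed to be the import path of the Agent check library on your Agent version.

```python
import importlib.util
import sys


def find_missing(module_names):
    """Return the names from module_names that this interpreter cannot import."""
    missing = []
    for name in module_names:
        try:
            spec = importlib.util.find_spec(name)
        except ModuleNotFoundError:
            # Raised when the parent package of a dotted name is absent.
            spec = None
        if spec is None:
            missing.append(name)
    return missing


if __name__ == "__main__":
    # Should print a path under the Agent's embedded directory.
    print("Interpreter:", sys.executable)
    # Example module names only; replace them with what your check imports.
    print("Missing:", find_missing(["datadog_checks.base", "requests", "yaml"]))
```

If the printed interpreter path is not inside the Agent's install directory, the editor is still using a different Python and the missing-module list will not reflect what the Agent sees.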

At this point, you should have a code editor that uses the Agent's embedded Python environment, a Datadog Agent installed locally, and optionally another Datadog Agent that can collect metric data from your technology or application. With this setup, you have the foundation of a suitable environment for developing Datadog custom checks.

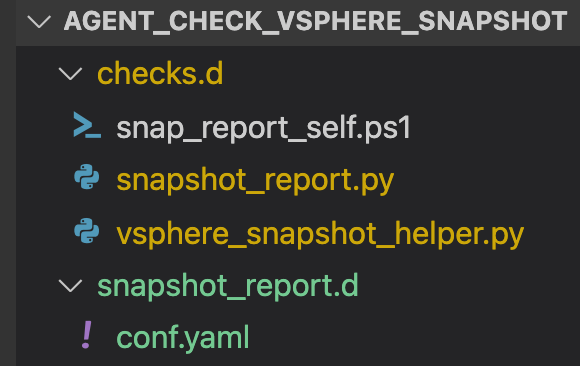

Creating the Custom Check Directory

A custom Agent check uses two main directories. The first is checks.d, which contains all of your Python scripts and any additional files the check requires.

- Ex. My sample custom check uses a PowerShell script to collect user information about vSphere snapshots.

The second directory lives inside your Agent's conf.d directory and should have the same name as your main Python script followed by ‘.d’. This directory contains the ‘conf.yaml’ file.
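To make the layout concrete, here is a small sketch that computes where each file is expected to live for a given check name. It assumes the usual Linux configuration root `/etc/datadog-agent` and the Agent's `checks.d` folder name; the check name `vsphere_snapshots` and the function `check_paths` are hypothetical, so adjust them for your system.

```python
from pathlib import PurePosixPath


def check_paths(check_name, agent_root="/etc/datadog-agent"):
    """Return the expected script and config locations for a custom check."""
    root = PurePosixPath(agent_root)
    return {
        # Python scripts and supporting files live in checks.d.
        "script": root / "checks.d" / f"{check_name}.py",
        # The config folder is the script name followed by ".d".
        "config": root / "conf.d" / f"{check_name}.d" / "conf.yaml",
    }


# Hypothetical check name, used only to show the resulting paths.
for label, path in check_paths("vsphere_snapshots").items():
    print(f"{label}: {path}")
```

The point to notice is that the script name and the config folder name must match, otherwise the Agent has no way to pair the configuration with the check.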

Creating custom check files

When I start developing a custom check, I create three files: the helper Python file, the main Python file, and conf.yaml. Together, these three files make up a structure that simplifies the development of custom checks.

The first file is the helper Python file. Its purpose is to hold all the custom functions your main Python file uses to collect data. I use this structure because it makes the functions easy to test on their own. When developing a custom check, I build out the helper functions that collect the data before writing the main Python file. My one recommendation is to give the file the same name as your main Python script but ending in helper.py, which makes it easy to tell multiple helper files apart.
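As an illustration of this split, a helper file can contain nothing but plain functions that run and can be tested without the Agent. Everything in the sketch below is hypothetical: the file name, the function names, and the comma-separated input format are stand-ins for whatever your data source actually returns.

```python
# my_check_helper.py (hypothetical file name)
from datetime import datetime, timezone


def parse_snapshot_line(line):
    """Parse 'vm_name, snapshot_name, created_iso' into a dict."""
    vm_name, snapshot_name, created = [part.strip() for part in line.split(",")]
    return {
        "vm": vm_name,
        "snapshot": snapshot_name,
        # Assumption: timestamps without an offset are in UTC.
        "created": datetime.fromisoformat(created).replace(tzinfo=timezone.utc),
    }


def snapshot_age_days(snapshot, now=None):
    """Return the snapshot's age in whole days."""
    now = now or datetime.now(timezone.utc)
    return (now - snapshot["created"]).days


if __name__ == "__main__":
    # Running the helper directly exercises it without the Agent.
    sample = parse_snapshot_line("web-01, pre-upgrade, 2024-01-01T00:00:00")
    print(sample["vm"], snapshot_age_days(sample))
```

Because these functions take plain values and return plain values, you can run the file directly from your editor and only wire the results into the main check once they behave as expected.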

The second file is the Python Agent check script. Datadog has excellent documentation on the general structure of this main file. Something to keep in mind is that a typical pip install won’t add missing modules to the Agent's Python environment. You have to run a custom Agent command that installs the module into the embedded environment instead. I recommend wrapping the affected imports in a try/except statement to account for this.
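A minimal sketch of that pattern might look like the following. The helper name `import_or_explain` is hypothetical, and the install command in the hint is the Linux form as I understand it from Datadog's documentation on adding custom Python packages to the Agent, so verify it for your platform and Agent version before relying on it.

```python
import importlib

# Assumed Linux command for installing into the Agent's embedded Python.
# Check Datadog's documentation for the Windows and macOS equivalents.
INSTALL_HINT = (
    "sudo -Hu dd-agent /opt/datadog-agent/embedded/bin/pip install {package}"
)


def import_or_explain(module_name, package=None):
    """Import module_name, or raise ImportError that names the install command."""
    try:
        return importlib.import_module(module_name)
    except ImportError as exc:
        hint = INSTALL_HINT.format(package=package or module_name)
        raise ImportError(
            f"{module_name} is missing from the Agent's Python environment; "
            f"try: {hint}"
        ) from exc


# Usage: a standard-library module imports normally.
json_module = import_or_explain("json")
print(json_module.dumps({"status": "ok"}))
```

When a module is missing, the error message then carries the command needed to fix it, which is easier to act on than a bare import failure.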

The conf.yaml is a straightforward file to create. It has two sections, init_config and instances. Use the init_config section for global variables: if you have values that every instance of your custom check should share, set them here. The instances section is a list in which each entry holds the variables for a single instance of the custom check, and one conf.yaml file can define multiple instances. To read these values in your main Python file, use self.instance.get('key_name') to pull the variable values for the current instance.
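The sketch below shows how shared and per-instance values can be combined. It uses plain dictionaries in place of the parsed conf.yaml so it runs without the Agent; inside a real check the same lookups would be made on `self.init_config` and `self.instance`. The keys `host`, `timeout`, and `min_age_days`, the host names, and the function `resolve_settings` are all hypothetical.

```python
# Equivalent conf.yaml (hypothetical keys):
#   init_config:
#     timeout: 10
#   instances:
#     - host: vcenter-a.example.com
#       min_age_days: 3
#     - host: vcenter-b.example.com
init_config = {"timeout": 10}
instances = [
    {"host": "vcenter-a.example.com", "min_age_days": 3},
    {"host": "vcenter-b.example.com"},
]


def resolve_settings(init_config, instance):
    """Merge shared settings with the settings of one instance."""
    return {
        "host": instance.get("host"),
        # Instance value wins; otherwise use init_config, then a default.
        "timeout": instance.get("timeout", init_config.get("timeout", 30)),
        # Purely per-instance setting with a default of 7 days.
        "min_age_days": instance.get("min_age_days", 7),
    }


for instance in instances:
    print(resolve_settings(init_config, instance))
```

Passing a default to `.get()` keeps the check from failing when an optional key is left out of one instance.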

You should now be able to start developing your own custom Agent check. With these practices for configuring your Agent installation, setting up your code editor, and creating a file structure, you have a solid development foundation that will greatly simplify the Datadog custom check development process.
