A question that keeps surfacing in boardrooms, risk committees, and IT leadership meetings: Who's actually in charge of our AI?

Most organizations can't answer it, and the gap between the speed of AI adoption and the maturity of AI governance is where real organizational risk lives. Not the sci-fi kind. The kind that shows up as a regulatory audit, a discriminatory hiring decision, a runaway API bill, or a customer service model quietly degrading because no one noticed.

The Stakes Are Real, and Regulations Have Teeth

Governance conversations around AI aren't abstract anymore. The EU AI Act (Regulation 2024/1689), now in force, establishes binding obligations on organizations deploying AI systems, including requirements around transparency, human oversight, documentation, and risk classification. Non-compliance isn't an academic concern; it carries real legal exposure.

Closer to home, Canada's Federal AI Strategy for 2025–2027 articulates a clear mandate: AI systems must be "appropriately governed, with guidance, policy, and training in place to manage risk, address challenges, and uphold human rights, public trust, and national security." The Government of Canada is explicit that AI governance applies to the entire lifecycle, from design through decommissioning, and to any AI in use, whether built in-house, commercial off-the-shelf, or sourced from a vendor.

The NIST AI Risk Management Framework organizes its core around four functions: Govern, Map, Measure, and Manage. It has become a practical blueprint for organizations looking to operationalize responsible AI. Its GOVERN function alone covers over a dozen subcategories, from understanding legal and regulatory obligations to ensuring that roles, responsibilities, and accountability structures are defined and documented. The playbook is detailed. Most organizations don't have the infrastructure to execute it.

That's the problem ServiceNow's AI Control Tower is built to solve.

Four Risks You're Probably Already Living With

If your organization has deployed AI through a ServiceNow workflow, a third-party tool, a custom model, or a vendor integration, there's a reasonable chance you're already exposed to at least one of these:

1. Confidential data leakage

Employees using public AI tools or integrated models may inadvertently submit client data, source code, financial information, or other confidential material. Many AI providers log inputs and may use them for further model training, and content indexed for retrieval-augmented generation (RAG) can resurface in later responses. That exposure covers free text, document chunks, structured data (e.g., tables and spreadsheets), and code. Once the data leaves your network, there's no recall button.

2. Uncontrolled costs

AI usage at scale generates significant API and compute costs. Without central visibility, departments can accumulate thousands of dollars in cloud AI spend with no oversight. Because pricing is token-based, a single poorly designed workflow that sends oversized prompts on every call can trigger a massive bill.

3. Infrastructure and vendor risk

AI systems depend on third-party APIs, cloud vendors, and specific model versions. Workflows built on a specific model can break when that model is deprecated or updated. No governance means no strategy for avoiding vendor lock-in, no SLA enforcement, and no continuity plan.

4. Bias, accuracy, and legal liability

AI outputs can reflect training data biases, leading to discriminatory decisions in hiring, lending, or customer service. Relying on AI for regulated decisions without human oversight creates legal exposure under existing employment, human rights, and privacy laws.
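To make the uncontrolled-costs risk above concrete, here is a rough back-of-the-envelope sketch of how token-based pricing scales. The prices, call volumes, and token counts are made-up assumptions for illustration, not any vendor's real rates, and `TokenPricing` and `monthly_cost` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Hypothetical prices in USD per 1,000 tokens (not real vendor rates)."""
    input_per_1k: float
    output_per_1k: float


def monthly_cost(pricing, calls_per_day, input_tokens, output_tokens, days=30):
    """Estimate monthly spend for one workflow from its per-call token usage."""
    per_call = (
        (input_tokens / 1000) * pricing.input_per_1k
        + (output_tokens / 1000) * pricing.output_per_1k
    )
    return per_call * calls_per_day * days


# Assumed rates, chosen only to keep the arithmetic easy to follow.
pricing = TokenPricing(input_per_1k=0.01, output_per_1k=0.03)

# A lean workflow: short prompt, short answer.
lean = monthly_cost(pricing, calls_per_day=5_000, input_tokens=500, output_tokens=200)

# The same workflow, but resending a long document with every call.
bloated = monthly_cost(pricing, calls_per_day=5_000, input_tokens=20_000, output_tokens=200)

print(f"lean:    ${lean:,.2f} per month")
print(f"bloated: ${bloated:,.2f} per month")
```

Under these assumed numbers, the only difference between the two workflows is prompt size, yet the monthly estimate grows from roughly $1,650 to roughly $30,900. Without central visibility into per-workflow usage, that kind of jump is easy to miss until the invoice arrives.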

What the AI Control Tower Actually Does

ServiceNow's AI Control Tower is the enterprise answer to all of the above. Built natively on the Now Platform and underpinned by the CMDB and Common Services Data Model (CSDM), it provides a centralized governance and oversight layer for every AI asset across your organization, whether internally built, third-party sourced, or agent-driven.

Think of it as mission control for your enterprise AI portfolio. Here's what that looks like in practice:

- AI Discovery and Inventory

Control Tower maintains a complete registry of all AI models deployed across your instance, including who built them, where they're used, what data they touch, and what risks they carry. Shadow AI, or models operating outside IT's visibility, is surfaced and brought under management.

- Risk and Compliance Management

AI assets are mapped to regulatory frameworks, including NIST AI RMF, the EU AI Act, GDPR, and others. Control Tower gives compliance and risk leaders real-time insight into where governance controls are in place, where they're missing, and where exposure is growing.

- Drift Detection and Performance Monitoring

Control Tower continuously monitors AI performance metrics and alerts teams when model behavior drifts from baseline due to stale training data, shifting inputs, or business changes. When a model fails, it can trigger automated workflows to respond, such as deactivating the model and routing work manually until retraining is complete.

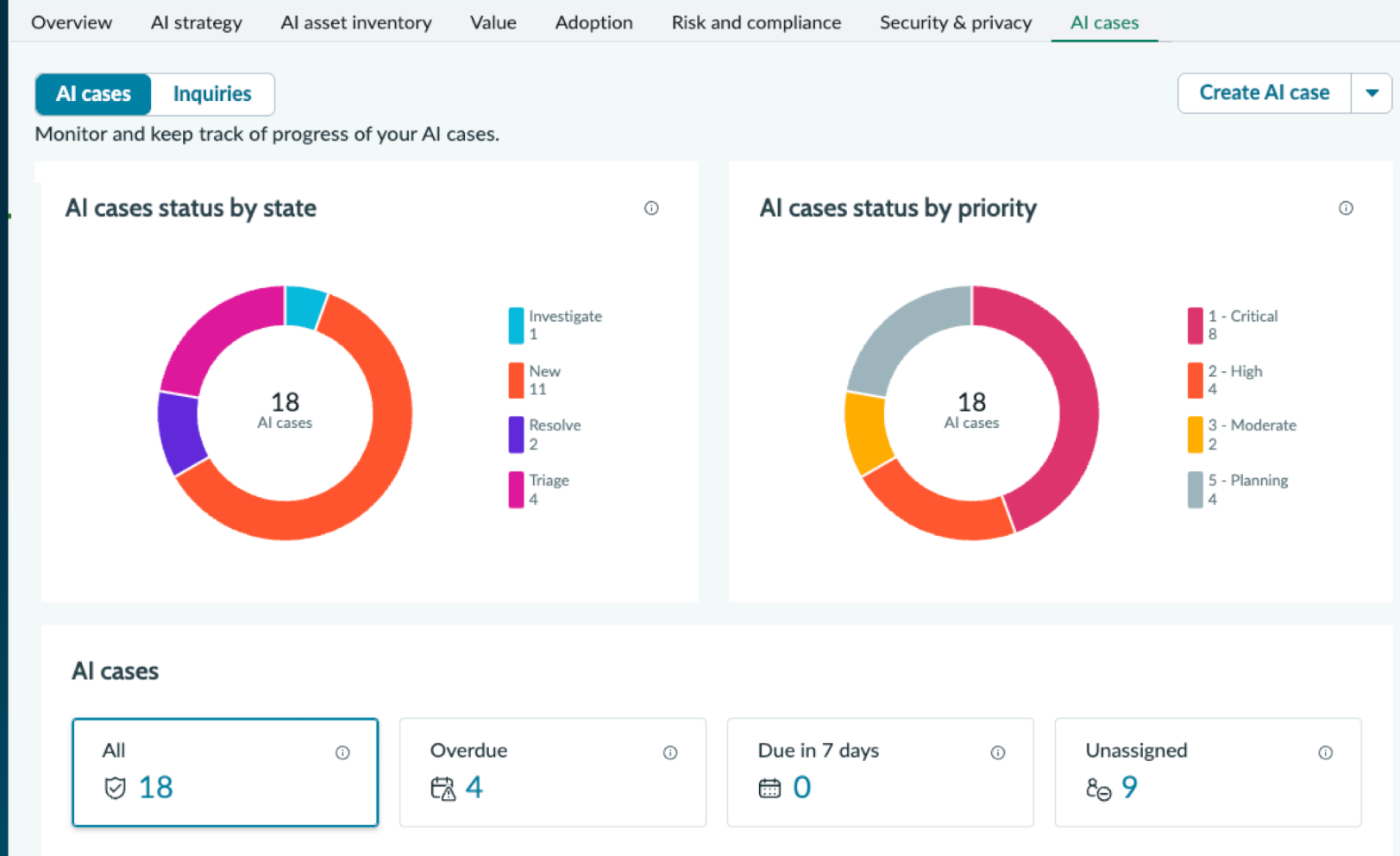

- AI Case Management and Lifecycle Governance

From project inception through retirement, Control Tower streamlines the AI lifecycle with automated workflows and defined roles. It connects AI initiatives to enterprise strategy, prioritizing projects that deliver measurable business value and helping IT teams manage any AI deployment consistently and efficiently.

- Explainability

When an AI model makes a decision (routing an incident, approving an access request, flagging a case for escalation), Control Tower can surface why that decision was made. This is not a nice-to-have. It's foundational for regulated industries and a core expectation of both the NIST AI RMF and the EU AI Act.
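The drift detection idea described above can be illustrated with a small sketch: compare a model's recent performance against its baseline and decide on a response when the gap exceeds a threshold. This is not Control Tower's API or its actual detection method. The function names, the 0.05 threshold, the sample scores, and the action labels are all assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class DriftResult:
    baseline: float
    recent: float
    drifted: bool


def check_drift(baseline_scores, recent_scores, max_drop=0.05):
    """Flag drift when the recent mean falls more than max_drop below the baseline mean."""
    baseline = mean(baseline_scores)
    recent = mean(recent_scores)
    return DriftResult(baseline, recent, (baseline - recent) > max_drop)


def respond(result):
    """Pick a hypothetical follow-up action; a real platform would trigger a workflow here."""
    if result.drifted:
        return "deactivate_model_and_route_manually"
    return "no_action"


# Made-up accuracy scores for a model at launch and in two later periods.
baseline_scores = [0.92, 0.91, 0.93]
stable_scores = [0.91, 0.90, 0.92]
degraded_scores = [0.84, 0.82, 0.83]

stable = check_drift(baseline_scores, stable_scores)
degraded = check_drift(baseline_scores, degraded_scores)

print(respond(stable))
print(respond(degraded))
```

A simple mean comparison like this is only one possible check. In practice, teams often monitor several metrics and input distributions, but the shape is the same: a defined baseline, a defined tolerance, and a defined response when the tolerance is exceeded.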

Governance as a Competitive Advantage

There's a tendency to frame AI governance as a compliance burden, something you do because you have to, not because it makes the AI better. That framing is wrong, and organizations that recognize this early will have a genuine advantage.

Governance done well means your AI investments are aligned with business outcomes you can measure. It means you can scale AI confidently — deploying new agents, new models, new workflows — because the infrastructure for oversight is already in place. It means you can answer the hard question: Can you show me that this AI system is behaving fairly, accurately, and in compliance with applicable law?

Whether you're early in your AI journey or already deploying at scale, governance becomes the foundation. RapDev works with teams to turn frameworks into real, working systems. Let's talk about where you are today.